Using Terragrunt to configure a backend for remote state files lets you write infrastructure code without copying and pasting the backend configuration into every module, which keeps your code DRY.

We will walk through configuring remote_state with an AWS S3 backend in Terragrunt, based on the Terragrunt quickstart guide. Then we will take the instance ID from the Terraform outputs stored in that remote state and use it to programmatically stop and start the instance with Python and boto3.

Deploying an S3 remote state backend and EC2 Instance with Terragrunt

To try this out yourself you can view and clone this example repo.

git clone https://github.com/cloudtruth/blog-examples.git

After cloning the repo, change the active directory for this walkthrough:

cd blog-examples/terragrunt-remote-state

This example has the following folder structure, which configures an S3 bucket and deploys an instance whose remote state is stored in that bucket.

# terragrunt-remote-state
├── stage
│   ├── instance
│   │   ├── main.tf
│   │   └── terragrunt.hcl
│   └── terragrunt.hcl

The backend configuration is defined once in the root terragrunt.hcl file. It creates a DynamoDB lock table called my-lock-table and an S3 backend. To follow along, replace YOUR_UNIQUE_BUCKET_NAME in the bucket setting with a valid, globally unique name.

# stage/terragrunt.hcl
remote_state {
  backend = "s3"
  generate = {
    path      = "backend.tf"
    if_exists = "overwrite_terragrunt"
  }
  config = {
    bucket         = "YOUR_UNIQUE_BUCKET_NAME"
    key            = "${path_relative_to_include()}/terraform.tfstate"
    region         = "us-east-1"
    encrypt        = true
    dynamodb_table = "my-lock-table"
  }
}

The instance folder contains a terragrunt.hcl file that calls the Terragrunt helper find_in_parent_folders(). The helper locates the root terragrunt.hcl file further up the directory tree, and the include block inherits its remote_state configuration.

# stage/instance/terragrunt.hcl
include {
  path = find_in_parent_folders()
}

The instance folder also contains a main.tf. This Terraform code configures an EC2 instance in us-east-1 and defines outputs for the instance ID and public IP.

# stage/instance/main.tf
provider "aws" {
  profile = "default"
  region  = "us-east-1"
}

resource "aws_instance" "app_server" {
  ami           = "ami-0aeeebd8d2ab47354"
  instance_type = "t2.micro"
  tags = {
    Name = "RemoteStateInstance"
  }
}

output "instance_id" {
  description = "ID of the EC2 instance"
  value       = aws_instance.app_server.id
}

output "instance_public_ip" {
  description = "Public IP address of the EC2 instance"
  value       = aws_instance.app_server.public_ip
}

Now you can change directory to the instance folder and run terragrunt apply.

cd stage/instance

terragrunt apply -auto-approve --terragrunt-non-interactive

Terragrunt dynamically generates a backend.tf file in the instance folder. This Terraform file contains the backend details. On the first run, Terragrunt creates the S3 bucket if it does not already exist, and that bucket becomes the destination for the terraform.tfstate file.
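To make the destination concrete, here is a minimal sketch of how the key setting from the root terragrunt.hcl resolves for the instance module. The function name state_key is hypothetical, and the path arithmetic only approximates what path_relative_to_include() does for this simple two-level layout.

```python
from pathlib import PurePosixPath


def state_key(root_dir: str, module_dir: str) -> str:
    """Approximate "${path_relative_to_include()}/terraform.tfstate".

    root_dir is the folder holding the root terragrunt.hcl and module_dir
    is the folder holding the child terragrunt.hcl (assumed layout).
    """
    relative = PurePosixPath(module_dir).relative_to(PurePosixPath(root_dir))
    return f"{relative}/terraform.tfstate"


if __name__ == "__main__":
    # For the layout in this walkthrough the state lands under "instance/".
    print(state_key("stage", "stage/instance"))
```

Under these assumptions the object in the bucket would be instance/terraform.tfstate, so every additional module placed under stage gets its own state file without any extra backend configuration.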

Using Python with Boto3 to stop and start the AWS Instance

Boto3 is the AWS SDK for Python. It lets us interact with AWS services programmatically and authenticates to your AWS account with credentials configured through the AWS CLI or a credentials file.

For this example we are going to use boto3 to stop and start the deployed AWS instance using the instance_id we set as a Terraform output in our Terragrunt deploy.

The following Python script, located in blog-examples/terragrunt-remote-state, reads the environment variable S3_INSTANCE_ID and gives the user options to stop or start the instance with that ID.

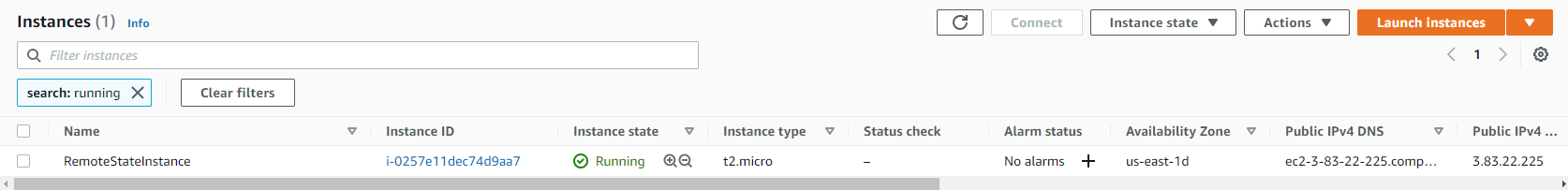
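For readers who cannot see the screenshot, the following is a rough sketch of what a script like this could look like. It is not the repo's actual instance_tfstate.py: the helper names build_parser and change_instance_state are hypothetical, the us-east-1 region is assumed from the Terraform code above, and the check that exits when the instance is not found in the region is left out.

```python
import argparse
import os
import sys


def build_parser():
    """Accept either --stop or --start; both write to the same 'action' value."""
    parser = argparse.ArgumentParser(description="Stop or start an EC2 instance")
    group = parser.add_mutually_exclusive_group()
    group.add_argument("--stop", dest="action", action="store_const", const="stop")
    group.add_argument("--start", dest="action", action="store_const", const="start")
    return parser


def change_instance_state(ec2_client, instance_id, action):
    """Call the matching EC2 API and return its raw response."""
    if action == "stop":
        return ec2_client.stop_instances(InstanceIds=[instance_id])
    if action == "start":
        return ec2_client.start_instances(InstanceIds=[instance_id])
    raise ValueError(f"unsupported action: {action}")


def main(argv=None):
    parser = build_parser()
    action = parser.parse_args(argv).action
    if action is None:
        parser.print_usage()
        return
    instance_id = os.environ.get("S3_INSTANCE_ID")
    if not instance_id:
        sys.exit("S3_INSTANCE_ID is not set")
    import boto3  # imported late so the helpers above work without boto3 installed
    ec2 = boto3.client("ec2", region_name="us-east-1")  # region assumed from main.tf
    print(instance_id)
    print("Success", change_instance_state(ec2, instance_id, action))


if __name__ == "__main__":
    main()
```

Passing the EC2 client into change_instance_state keeps the AWS call easy to exercise with a stub, which is handy when you want to try the flag handling without touching a real instance.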

The instance id can be obtained from the terragrunt output command or by reviewing the state file in S3.

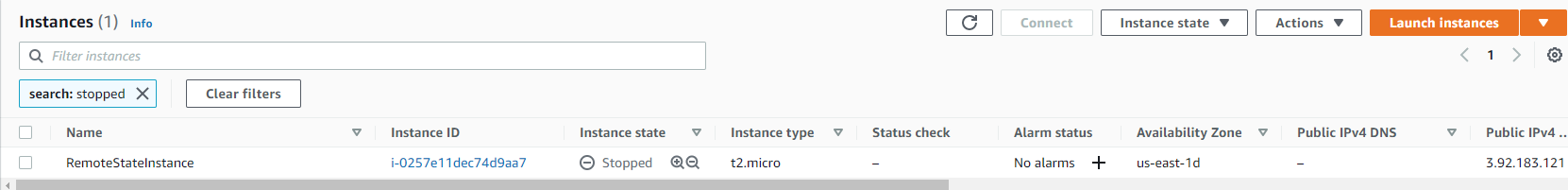

instance_id = "i-0257e11dec74d9aa7"
instance_public_ip = "3.92.183.121"
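If you would rather collect these values from Python than copy them by hand, one option is to ask for JSON output and parse it. The sketch below assumes that terragrunt output -json prints one object per output with a value field, as terraform output -json does; the function names parse_outputs and read_outputs are hypothetical.

```python
import json
import subprocess


def parse_outputs(output_json: str) -> dict:
    """Flatten the JSON printed by `output -json` into {name: value}."""
    return {name: entry["value"] for name, entry in json.loads(output_json).items()}


def read_outputs(module_dir: str) -> dict:
    """Run `terragrunt output -json` in module_dir (requires terragrunt on PATH)."""
    result = subprocess.run(
        ["terragrunt", "output", "-json"],
        cwd=module_dir,
        check=True,
        capture_output=True,
        text=True,
    )
    return parse_outputs(result.stdout)


if __name__ == "__main__":
    # Sample text shaped like the JSON output; values taken from this walkthrough.
    sample = (
        '{"instance_id": {"sensitive": false, "type": "string", '
        '"value": "i-0257e11dec74d9aa7"}}'
    )
    print(parse_outputs(sample)["instance_id"])
```

In the walkthrough's layout you would call read_outputs("stage/instance") and pick instance_id from the returned dictionary.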

You can export the instance id as an environment variable in your local environment and the script will read it with os.environ.get.

export S3_INSTANCE_ID=i-0257e11dec74d9aa7

Running the script with the --stop flag reads the instance ID from the environment and stops that instance in EC2. If the ID in S3_INSTANCE_ID does not exist in your AWS region, the script exits.

python instance_tfstate.py --stop

i-0257e11dec74d9aa7

Success {'StoppingInstances': [{'CurrentState': {'Code': 64, 'Name': 'stopping'}, 'InstanceId': 'i-0257e11dec74d9aa7', 'PreviousState': {'Code': 16, 'Name': 'running'}}], 'ResponseMetadata': {'RequestId': 'cf7721d9-1a6f-4baf-bf45-770e28936d23', 'HTTPStatusCode': 200, 'HTTPHeaders': {'x-amzn-requestid': 'cf7721d9-1a6f-4baf-bf45-770e28936d23', 'cache-control': 'no-cache, no-store', 'strict-transport-security': 'max-age=31536000; includeSubDomains', 'content-type': 'text/xml;charset=UTF-8', 'content-length': '579', 'date': 'Tue, 14 Sep 2021 18:22:30 GMT', 'server': 'AmazonEC2'}, 'RetryAttempts': 0}}

Using Terragrunt remote state outputs with dynamic changes

Rather than manually adding instance IDs to your environment whenever your configuration or environment changes, you can use CloudTruth to dynamically pick up changes from the Terraform remote state file. This means that if you destroy and re-create the EC2 instance, the new instance ID is picked up automatically from the remote state file with no code or environment changes.

CloudTruth allows you to read directly from a remote state file stored in S3 and programmatically access anything within the state file as a parameter by integrating directly with AWS S3.

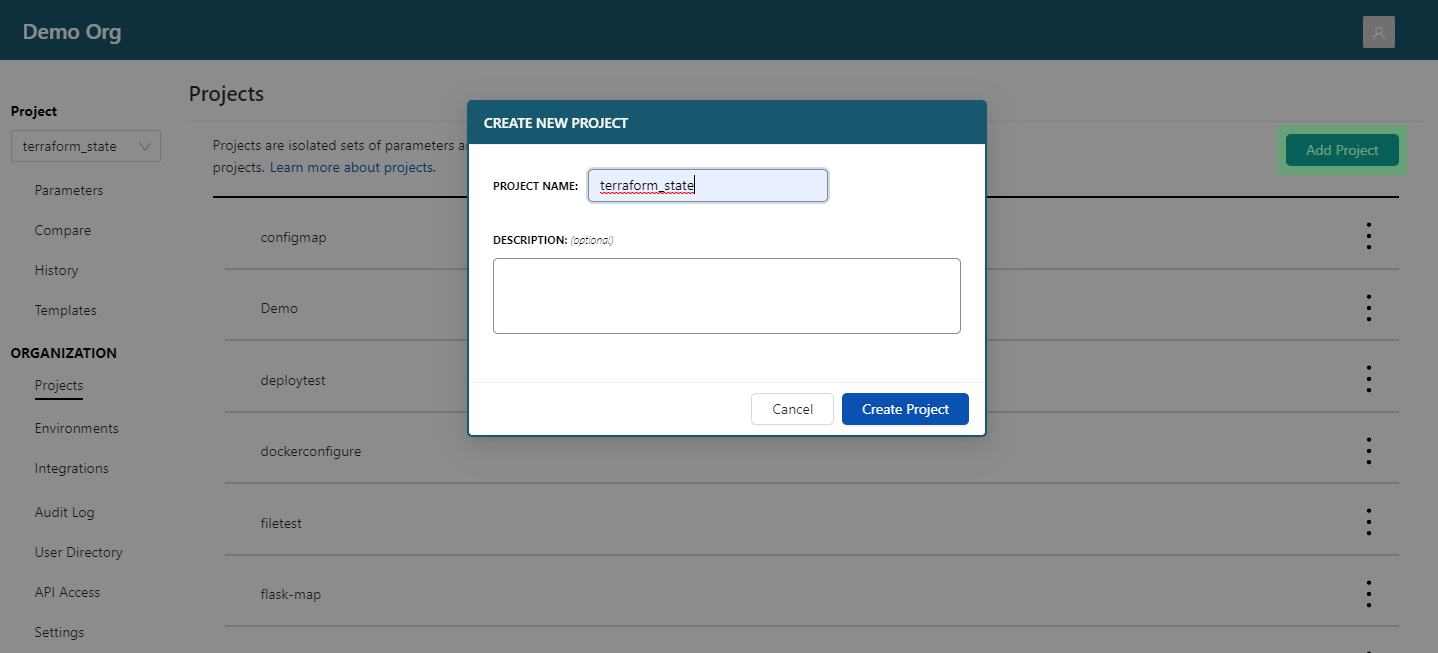

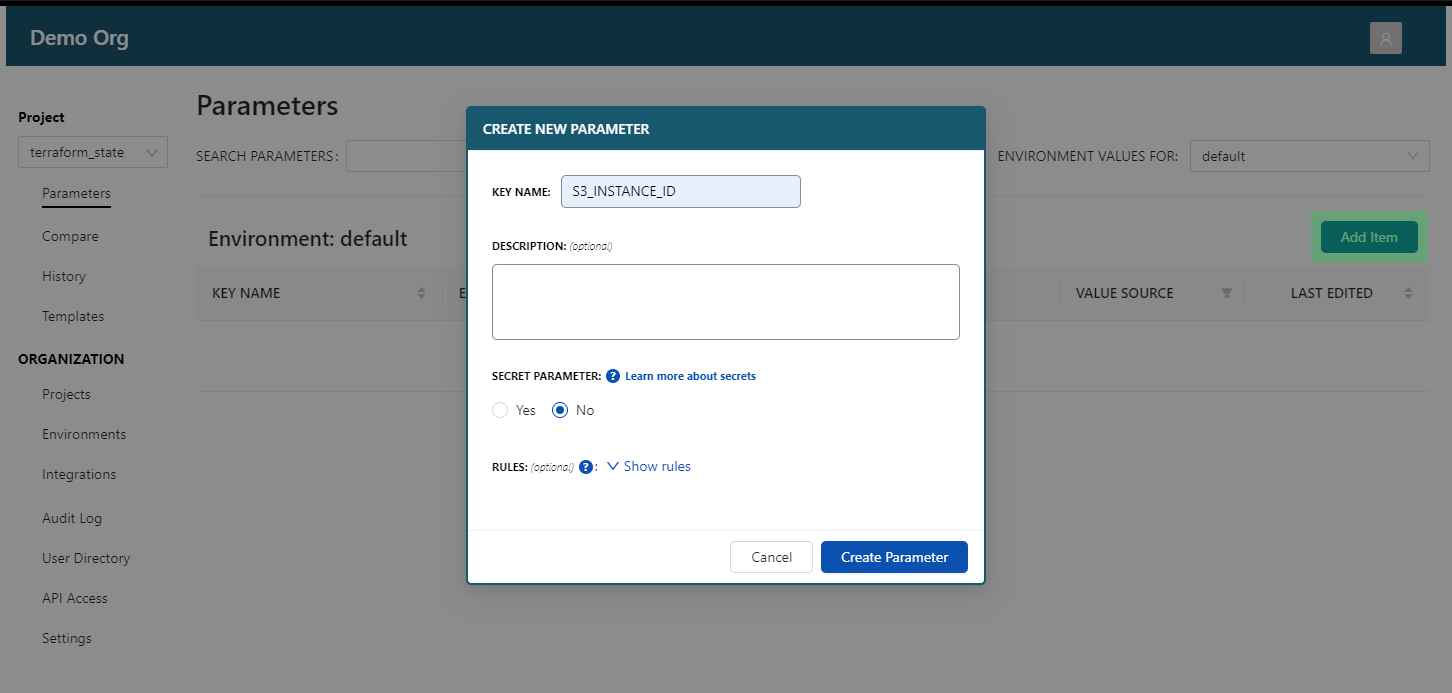

We can set up a CloudTruth project and reference the instance_id value with either the CloudTruth CLI or the UI. The following CLI commands create the project and the parameter that holds our dynamic instance ID.

cloudtruth project set terraform_state

Substitute your integrated AWS account and bucket name in the following command.

cloudtruth --project terraform_state parameter set S3_INSTANCE_ID --fqn aws://YOUR_ACCOUNT/us-west-2/s3/?r=YOUR_BUCKET/demo/instance/terraform.tfstate --

We use the JMESPath expression outputs.instance_id.value to pull the value directly out of the state file. The relevant part of the state file looks like this:

"outputs": {
  "instance_id": {
    "value": "i-0257e11dec74d9aa7",
    "type": "string"
  }
}
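To see what that selector does, here is a small sketch that walks the same path through a state file fragment. It uses plain dictionary lookups as a stand-in for JMESPath and only handles simple dotted identifiers; the select helper is hypothetical and is not part of CloudTruth or the jmespath library.

```python
import json
from functools import reduce


def select(document: dict, dotted_path: str):
    """Follow a dotted path such as "outputs.instance_id.value" through nested dicts."""
    return reduce(lambda node, key: node[key], dotted_path.split("."), document)


# Fragment shaped like the outputs section of the state file shown above.
state = json.loads(
    '{"outputs": {"instance_id": {"value": "i-0257e11dec74d9aa7", "type": "string"}}}'
)

if __name__ == "__main__":
    print(select(state, "outputs.instance_id.value"))
```

A real JMESPath implementation supports far more than this (filters, projections, functions), but for a flat output value the lookup amounts to three nested key accesses.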

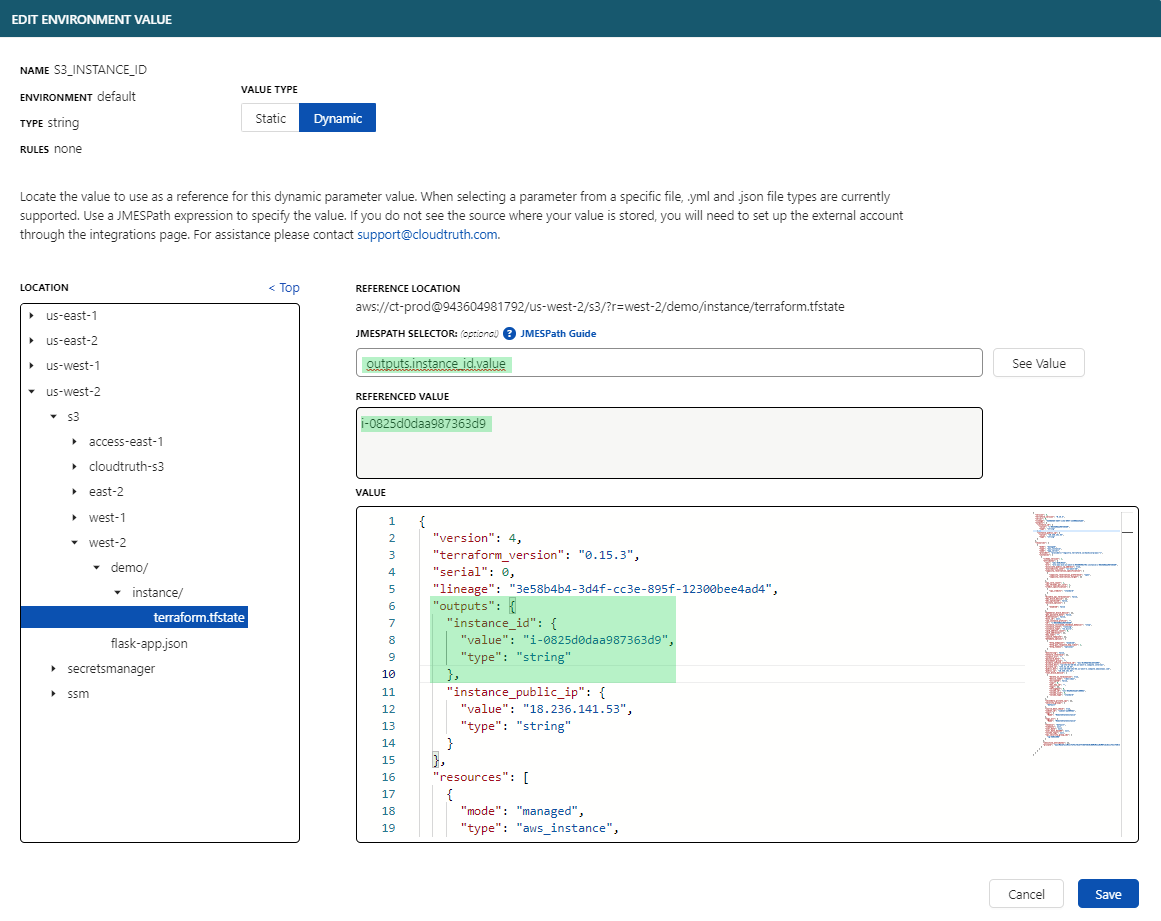

The CloudTruth UI

From the projects page create a new project called terraform_state.

Add a new parameter named S3_INSTANCE_ID.

Set a Dynamic value with the S3 bucket path YOUR_UNIQUE_BUCKET_NAME/instance/terraform.tfstate in us-east-1 and use the JMESPath selector outputs.instance_id.value. Click Save to set the value to the instance ID output from the remote state file.

Now you can use our REST API or the cloudtruth run command to pass the Terraform remote state output directly to your infrastructure scripts!

This example uses cloudtruth run, which injects the parameters from the terraform_state project we created into the script's environment.

cloudtruth --project terraform_state run -- python instance_tfstate.py --start

i-0257e11dec74d9aa7

Success {'StartingInstances': [{'CurrentState': {'Code': 0, 'Name': 'pending'}, 'InstanceId': 'i-0257e11dec74d9aa7', 'PreviousState': {'Code': 80, 'Name': 'stopped'}}], 'ResponseMetadata': {'RequestId': '418a4c71-4c0f-49d6-a510-7d56f90207eb', 'HTTPStatusCode': 200, 'HTTPHeaders': {'x-amzn-requestid': '418a4c71-4c0f-49d6-a510-7d56f90207eb', 'cache-control': 'no-cache, no-store', 'strict-transport-security': 'max-age=31536000; includeSubDomains', 'content-type': 'text/xml;charset=UTF-8', 'content-length': '579', 'date': 'Tue, 14 Sep 2021 18:43:05 GMT', 'server': 'AmazonEC2'}, 'RetryAttempts': 0}}

The instance is now running in AWS EC2!

Don’t forget to clean up your infrastructure with terragrunt destroy. You will need to manually delete the Terragrunt-created S3 bucket, as the wrapper does not provide a way to delete generated backends.

Summary

We walked through how to deploy an AWS S3 backend with remote state and deployed an EC2 instance in the AWS free tier with Terragrunt’s DRY deploy methodology. We then leveraged the instance id output with a sample Python script using boto3 that can stop and start the instance with a manual environment variable and reviewed how we can dynamically get Terraform state output with CloudTruth.
